Disclaimer: Everything described here is pure imagination and any resemblance to reality is coincidental. This document is intended for security professionals to develop defensive countermeasures. The author is not responsible for the consequences of any action taken based on the information provided in this article. I keep every scenario at the threat-vector level: no operational detail, no tactics, no weapons information, and each one is paired with a defensive recommendation.

Note: As with the rest of the SRF-IWS series, I leaned on several AI models to help build realistic, defense-oriented attack scenarios. The goal is Blue Team planning, nothing else.

A note on the series. This article belongs to SRF-IWS, but it is not a continuation of the Davos articles. Those (Davos 2024, 2025 and 2026) are their own line of analysis on the World Economic Forum; this one stands on its own and simply shares the same framework. I do reference them throughout for context, so they are worth reading as background. The difference this time is the protectee: instead of delegates at a corporate forum, we are looking at the head of the Catholic Church and sovereign of Vatican City State, out in the open, in the middle of a European capital, surrounded by more than a million people.

Introduction

From 6 to 9 June 2026, Pope Leo XIV, the first North American pontiff, will be in Madrid as the opening leg of his apostolic journey to Spain (Madrid, Barcelona and the Canary Islands, 6 to 12 June). It is the first papal visit to the Spanish capital in fifteen years, since Benedict XVI and World Youth Day back in 2011. The Madrid program is dense, and from a protective-intelligence point of view it is wide open:

- Arrival on 6 June, with a courtesy visit to King Felipe VI, Queen Letizia and the Royal Family.

- A youth prayer vigil at Plaza de Lima, on the Paseo de la Castellana, that same evening.

- On Sunday 7 June, the solemnity of Corpus Christi, an open-air Mass at Plaza de Cibeles followed by a Eucharistic procession through the centre of Madrid.

- On Monday 8 June, an address to Parliament at the Congress of Deputies, and later an encounter with the diocesan community at the Santiago Bernabéu.

- Popemobile and motorcade movements concentrated on the Castellana–Cibeles–Lima axis and the fixed nodes: Barajas, the Royal Palace, Congress and the Bernabéu.

The address to Parliament deserves its own line, because it is genuinely historic. For the first time ever, a Pope will speak before a joint session of the Cortes Generales, deputies and senators together. John Paul II came to Spain five times and Benedict XVI three, and none of them ever addressed the chamber. That is the kind of high-symbolism, high-protocol moment an adversary loves.

National and municipal authorities have put together a security and mobility operation without precedent in the city, with attendance across the main events projected at up to 1.8 million people. The chosen motto, “Alzad la mirada” / “Lift up your eyes” (John 4:35), and Leo XIV’s emphasis on migration (the journey ends in the Canary Islands, Spain’s main Atlantic entry point for migrants) turn this into more than a physical-security problem. It is a near-perfect information-warfare target: globally televised, built around a polarising subject, with a protectee whose every sentence carries geopolitical weight.

A Pope is not a Davos delegate, and the threat aperture is much wider. You have religiously motivated extremists, both jihadist and anti-Catholic; traditionalist and sedevacantist fringe actors; anti-clerical and anarchist currents; anti-migration extremists reacting to the Pope’s message; grievance-driven lone actors; and nation-state information operations looking to weaponise the spectacle. None of this is hypothetical. Pontiffs have long been targets. John Paul II was shot in St. Peter’s Square in 1981. He was attacked again in 1982 at Fátima, with a bayonet, by a Spanish priest, Juan María Fernández y Krohn. The 1995 Bojinka plot in Manila included a plan to assassinate him. These are documented facts, and they are reason enough to plan seriously.

What follows are realistic, defense-oriented scenarios across the information, cyber, RF, drone, crowd and physical domains. Each one pairs the attack with its own defense, in the same section.

1. Disinformation and the migration narrative

The most likely and most damaging vector here is not a bomb or a rifle. It is information. Leo XIV’s visit is framed around migration and lands in the middle of an active Spanish immigration debate, which is exactly the terrain influence operations like to work, whether they come from a state actor trying to inflame Spanish and EU fault lines or from domestic extremists at either end of that debate.

The campaign I would expect looks something like this. Fabricated papal “quotes”: AI-generated text, images and short clips that put inflammatory positions on immigration, the Spanish government, Catalonia or the monarchy in the Pope’s mouth, dropped a few hours before a key event to own the news cycle. Doctored homily fragments: audio or video from the Cibeles Mass or the Parliament speech, selectively cut or fully faked to manufacture outrage in either direction and draw opposing groups to the venues to confront each other. Forged “leaks”: fake Vatican or Moncloa documents alleging secret political deals tied to the visit, designed to make both Church and state look as if they are hiding something. Astroturfed outrage: inauthentic networks pushing divisive hashtags, fake eyewitness accounts and false incident reports to either scare people away or provoke a confrontation. And the simplest one: spoofed accounts and look-alike domains that copy the official registration and information sites to hand out fake schedules, fake “cancellations” or malicious links.

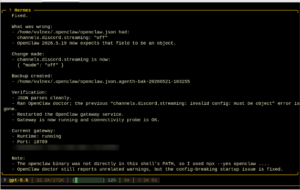

Figure 1 — Disinformation and migration-narrative attack tree, generated with USecVisLib.

Defense

This has to be treated as a primary security function, not a press afterthought. That means a joint Vatican–Spanish communications cell with the authority to rebut fast, official audio and video signed at the source (C2PA-style provenance), an active pipeline to monitor and take down look-alike domains, and one verified channel the public knows to trust. If there is a single authoritative source, most of the forgeries lose their oxygen.
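To make the look-alike-domain pipeline a little more concrete, here is a minimal Python sketch of the matching step only. It assumes a hypothetical official domain (`example-visit.org`), a small hand-made homoglyph table and an edit-distance threshold of 2; none of these come from the actual Madrid operation, and a production pipeline would also decode punycode and pull candidates from a newly-registered-domain or certificate-transparency feed.

```python
import unicodedata

# Hypothetical official domain, used only for illustration.
OFFICIAL = "example-visit.org"

# Small, deliberately incomplete homoglyph map: Cyrillic/Greek letters and
# digits that are often substituted for Latin letters in look-alike domains.
HOMOGLYPHS = str.maketrans({
    "\u0430": "a", "\u0435": "e", "\u043e": "o",  # Cyrillic a, e, o
    "\u0440": "p", "\u0441": "c", "\u0445": "x",  # Cyrillic er, es, kha
    "\u03bf": "o",                                # Greek omicron
    "0": "o", "1": "l", "3": "e", "5": "s",
})


def skeleton(label: str) -> str:
    """Reduce a domain label to a rough visual skeleton for comparison."""
    s = unicodedata.normalize("NFKC", label.lower()).translate(HOMOGLYPHS)
    s = s.replace("rn", "m")  # 'rn' renders like 'm' in many fonts
    return s.replace("-", "").replace("_", "")


def levenshtein(a: str, b: str) -> int:
    """Classic edit distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = cur
    return prev[-1]


def is_lookalike(candidate: str, official: str = OFFICIAL, max_distance: int = 2) -> bool:
    """Flag a domain that visually imitates the official one under any TLD."""
    candidate = candidate.lower().rstrip(".")
    if candidate == official:
        return False
    # Drop the final label (TLD) so a .com copy of a .org site still matches.
    cand = skeleton(candidate.rsplit(".", 1)[0])
    ref = skeleton(official.rsplit(".", 1)[0])
    return ref in cand or levenshtein(cand, ref) <= max_distance
```

With these assumptions, `examp1e-visit.org`, `exarnple-visit.com` and a variant with a Cyrillic `е` are all flagged, while `weather.com` is not. The threshold is a trade-off: a short official name with `max_distance=2` will produce false positives, so the output is a triage queue for an analyst, not an automatic takedown list.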

2. Deepfakes and synthetic media

I covered this at length in the Davos 2026 analysis, and nothing about it has gotten easier to defend against. Real-time deepfakes are mature, voice cloning needs only a few seconds of audio, and people only spot a good video deepfake a fraction of the time. A globally broadcast Pope, with an enormous public archive of audio and video, is about as good a training subject as exists. So is the King, and so are the senior organisers.

The scenarios that worry me are the ones that spoof authority. A faked “official” evacuation announcement, or a “device found” warning, pushed onto a compromised PA system, hijacked digital signage or a spoofed alert channel at Cibeles, Lima or the Bernabéu, with the aim of triggering a panic (see section 3). Voice-cloned traffic impersonating an incident commander or a Vatican advance team to redirect units, shift motorcade timing or open a gap. Synthetic “private” recordings of the Pope and the King, or the Pope and government officials, inventing commitments or insults that were never said, released to poison the diplomacy of the visit. Or fabricated “behind the scenes” footage timed to step on the Parliament address.

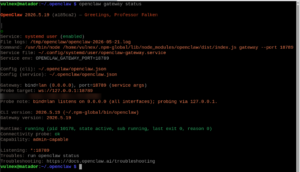

Figure 2 — Deepfake and synthetic-media attack tree (USecVisLib).

Defense

The defensive answer is old-fashioned and it works: out-of-band verification and challenge/response for all command, advance-team and protocol communications. No unit acts on a voice or a face alone. On top of that, run deepfake detection on the monitored broadcast feeds, lock down PA, signage and alerting as critical infrastructure with real authentication, and pre-script the crowd messaging so that anything the public hears comes only through verified, redundant channels.
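The challenge/response idea fits in a few lines. This is a sketch under stated assumptions, not a fielded protocol: each authorised caller holds a pre-shared key issued out of band before the event (hypothetical here), the receiving unit reads out a fresh random nonce, and the caller reads back a short code derived from that nonce and the exact order. A cloned voice without the key cannot produce the code, and because the order text is bound into the HMAC, a code captured for one order does not validate another. The 60-second expiry and the 8-hex-digit code length are arbitrary choices for the example.

```python
import hashlib
import hmac
import secrets
import time
from dataclasses import dataclass, field
from typing import Optional

CHALLENGE_TTL_S = 60.0  # assumption: a challenge is only valid for one minute


@dataclass
class Challenge:
    """Fresh nonce the receiving unit reads out before acting on an order."""
    nonce: str = field(default_factory=lambda: secrets.token_hex(4))
    issued_at: float = field(default_factory=time.monotonic)


def response_code(key: bytes, nonce: str, order: str) -> str:
    """Short code the caller reads back: an HMAC over the nonce and the order text."""
    normalised = " ".join(order.lower().split())  # ignore case and spacing
    msg = f"{nonce}|{normalised}".encode()
    return hmac.new(key, msg, hashlib.sha256).hexdigest()[:8]


def verify(key: bytes, ch: Challenge, order: str, code: str,
           now: Optional[float] = None) -> bool:
    """Accept the order only if the code matches this nonce and this order, and is fresh."""
    now = time.monotonic() if now is None else now
    if now - ch.issued_at > CHALLENGE_TTL_S:
        return False
    return hmac.compare_digest(response_code(key, ch.nonce, order), code)
```

The key itself never travels over the radio; only the nonce and the short code do, so intercepting one exchange gives an adversary nothing reusable.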

3. The crowd as the weapon

With up to 1.8 million people spread across Cibeles, Lima, the procession route and the Bernabéu, the highest-probability mass-casualty outcome needs no weapon at all. You only have to engineer panic in a dense crowd. This is the most underappreciated vector on the list, and it is not theoretical. The history is long and grim: Hillsborough in 1989, the Love Parade in Duisburg in 2010, the 2015 Mina crush during the Hajj, Astroworld in 2021 and Itaewon in 2022.

How would it be done? The trigger is a synchronised false alarm (a rumour of gunfire, a “bomb” or a fire, spread by SMS and social media, a single staged loud bang, or hijacked signage) placed at a bottleneck where density is already critical: the narrow approaches to Cibeles or Lima, or a stadium concourse. Pair it with comms denial, with cellular and Wi-Fi jammed or saturated so the crowd cannot orient itself and official messaging cannot get through, and rumour fills the gap (this ties into section 7). Add flow manipulation, with exits blocked or falsely signed, and a controllable density turns into progressive collapse. And to overwhelm the response, the adversary initiates at several separated points at once so that stewarding and emergency services fragment.

Figure 3 — Engineered-panic and crowd-crush attack tree (USecVisLib).

Defense

Defending it comes down to seeing density in real time and being able to act on it. Overhead optical and thermal monitoring plus anonymised mobile-density analytics, with hard thresholds and pre-planned metering and reversible flow control. A public-address system that resists jamming. Stewards rehearsed to kill rumours on the spot. Egress that is engineered, clearly marked and over-provisioned. And one unified incident-command picture, so a small local event never gets the chance to cascade.
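The “hard thresholds” part can be sketched as a small state machine per zone. The density values (4 and 5 persons/m²) and the 0.5 persons/m² hysteresis margin below are illustrative assumptions, not crowd-safety guidance; real thresholds come from the venue’s own assessment, and the headcount would come from the optical, thermal and mobile-density feeds rather than a single number.

```python
from dataclasses import dataclass

# Illustrative thresholds in persons per square metre. Assumptions for the
# sketch only; real values come from the venue's own crowd-safety assessment.
WARN = 4.0
CRITICAL = 5.0
MARGIN = 0.5  # hysteresis: density must fall this far below a threshold to step down


@dataclass
class Zone:
    name: str
    area_m2: float
    state: str = "normal"  # "normal", "meter_inflow" or "close_inflow"

    def update(self, headcount: int) -> str:
        """Update the zone's flow-control state from a fresh headcount estimate."""
        d = headcount / self.area_m2
        if d >= CRITICAL:
            self.state = "close_inflow"
        elif d >= CRITICAL - MARGIN and self.state == "close_inflow":
            pass  # stay closed until density is clearly below CRITICAL
        elif d >= WARN:
            self.state = "meter_inflow"
        elif d >= WARN - MARGIN and self.state != "normal":
            self.state = "meter_inflow"  # step down one level, not straight to normal
        else:
            self.state = "normal"
        return self.state
```

The hysteresis is the design choice that matters: without it, a zone hovering around a threshold would flip between open and metered every few seconds, which confuses stewards and the crowd alike.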

4. Drones and counter-UAS

Open venues like Cibeles, Lima, the procession route and the open bowl of the Bernabéu are exactly the places small drones exploit. The cost problem I described in the Davos 2026 analysis still holds: the drones are cheap, the defenses are expensive, and a swarm can simply saturate point defenses.

The uses are familiar. Surveillance and targeting: small quadcopters mapping security positions, motorcade timing and VIP locations in real time. Panic-payload delivery: a drone dispersing smoke, an irritant or pyrotechnics over a dense crowd, where the point is panic and a crush rather than direct casualties. Swarm saturation and decoys: expendable drones soaking up the counter-UAS effort while a primary platform finishes its job, or FPV drones using the urban canyons for a low, fast approach. And RF payloads: airborne jammers or IMSI-catchers degrading comms and collecting intelligence over the crowd.

Figure 4 — Drone and counter-UAS attack tree (USecVisLib).

Defense

The defense has to be layered and multi-modal (radar plus RF plus acoustic plus electro-optical/infrared) so that no single trick blinds it. Enforce the no-fly and temporary flight restriction zones with the legal authority to actually act on a violation. Pre-position effectors on the likely approach lines. And, because this matters more here than at Davos, choose mitigation that does not itself hurt or panic a 1.8-million-person crowd. Detection, RF takeover and geofencing, and controlled interception come well before anything kinetic over people’s heads.
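A core piece of “multi-modal” is requiring corroboration across sensor types before a track is confirmed. Here is a minimal sketch, assuming all sensors report into a shared local grid with synchronised clocks; the 3-second window, 50-metre radius and two-modality rule are illustrative parameters, not values from any real counter-UAS system.

```python
from dataclasses import dataclass
from math import hypot
from typing import List


@dataclass(frozen=True)
class Detection:
    sensor: str  # modality: "radar", "rf", "acoustic" or "eoir"
    t: float     # seconds since start
    x: float     # metres east on a local grid
    y: float     # metres north on a local grid


def corroborated(dets: List[Detection], window_s: float = 3.0,
                 radius_m: float = 50.0, min_modalities: int = 2) -> List[Detection]:
    """Keep detections confirmed by >= min_modalities distinct sensor types
    reporting within window_s seconds and radius_m metres of each other."""
    confirmed = []
    for d in dets:
        modalities = {
            o.sensor for o in dets
            if abs(o.t - d.t) <= window_s and hypot(o.x - d.x, o.y - d.y) <= radius_m
        }
        if len(modalities) >= min_modalities:
            confirmed.append(d)
    return confirmed
```

The point of the rule is that a single spoofed sensor cannot create a confirmed track on its own. Single-modality hits should still be displayed at lower confidence rather than discarded, since a sensor type that suddenly goes quiet is itself a signal.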

5. The motorcade and the Popemobile

Movements concentrate on a predictable axis, Castellana–Cibeles–Lima, and on fixed arrival and departure nodes: Barajas, the Royal Palace, Congress, the Bernabéu. Predictability plus a slow, open, rope-line Popemobile is the classic protective dilemma, and there is no clever way around it.

The exploitation paths are well understood. Choke-point operations: surveillance identifies a fixed, slow point for a hostile act, a staged disturbance or comms denial. GPS spoofing or jamming of the escort vehicles to fragment the motorcade or misdirect support and medical units; Iran’s 2011 capture of a U.S. RQ-170 drone, which Tehran attributed to GPS spoofing (a claim never independently confirmed), is the case most often cited for GNSS spoofing against even an advanced platform. Vehicle-as-weapon, the threat European planners have rehearsed most since the Nice and Berlin attacks of 2016: a hostile vehicle driven into a pedestrian-dense stretch of the route. And plain old hostile reconnaissance of static posts and timings beforehand.

Figure 5 — Motorcade and Popemobile attack tree (USecVisLib).

Defense

Defending the move means randomising route and timing wherever the program allows it, putting hostile-vehicle mitigation (barriers, sterile zones, controlled crossings) along the entire crowd-facing axis, and giving the escort vehicles anti-spoof, multi-constellation GNSS with inertial backup. Add aggressive counter-surveillance, dominate the rooftops and elevated positions with friendly observation and counter-sniper coverage, and configure the Popemobile to balance pastoral visibility against protection. It will always be a compromise; it should at least be a deliberate one.
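The “inertial backup” earns its keep as a cross-check, not only as a fallback. A minimal sketch, assuming positions on a local east/north grid in metres, velocity estimates from the vehicle’s inertial unit or odometry, and an illustrative error budget of 10 m plus 0.5 m per second of drift: flag any epoch where GNSS and dead reckoning disagree by more than that budget.

```python
from math import hypot
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # metres on a local east/north grid


def dead_reckon(start: Point, velocities: Sequence[Point], dt: float) -> List[Point]:
    """Integrate inertial/odometry velocity estimates (m/s) forward from a trusted fix."""
    x, y = start
    track = []
    for vx, vy in velocities:
        x += vx * dt
        y += vy * dt
        track.append((x, y))
    return track


def spoof_suspects(gnss: Sequence[Point], inertial: Sequence[Point], dt: float,
                   base_m: float = 10.0, drift_m_per_s: float = 0.5) -> List[int]:
    """Indices where GNSS disagrees with dead reckoning by more than the allowed
    error, which grows with time because inertial estimates drift."""
    flags = []
    for i, ((gx, gy), (nx, ny)) in enumerate(zip(gnss, inertial)):
        allowed = base_m + drift_m_per_s * (i + 1) * dt
        if hypot(gx - nx, gy - ny) > allowed:
            flags.append(i)
    return flags
```

For example, for a vehicle dead-reckoned east at 10 m/s with 1-second epochs, GNSS fixes that start drifting north by 30 m per epoch from the sixth fix onward are flagged from that epoch (index 5) on. A real implementation would re-anchor the dead-reckoning track after each trusted fix and combine this with multi-constellation consistency checks.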

6. Cyber attacks on the event and the city

The visit runs on a lot of software. A mass public registration system holding the personal data of potentially millions, accreditation and badging, ticketing, CCTV and access control, Madrid’s traffic and mobility management, emergency dispatch. As the GTG-1002 case from the Davos 2026 analysis showed, AI agents can map and exploit an ecosystem like this at machine speed, finding paths a human would miss.

The obvious moves: breach the registration system and weaponise the data, either exfiltrating attendee records for targeting, doxxing or spear-phishing, or corrupting the access lists to create chaos at the gates. Forge credentials by compromising the accreditation pipeline, and manufacture insider access in a press, volunteer or contractor role. Blind the surveillance: manipulate CCTV and access control to open timed blind spots. Hit the city systems: traffic management and signage during motorcade windows, or emergency dispatch during an incident, which is how a cyber event becomes a physical-safety event. And the simplest: DDoS or deface the official information channels at the moment public attention peaks, which loops straight back to section 1.

Figure 6 — Cyber attacks on event and city systems, attack tree (USecVisLib).

Defense

The defense is unglamorous and necessary: red-team every event and city system in scope before the visit, segment the life-safety and access-control systems so they are not reachable from everything else, run Zero Standing Privilege and Just-in-Time access so a stolen credential buys very little, put integrity monitoring on the accreditation and access lists, and make sure every life-safety function has a tested manual fallback for the day the software lies to you.
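Integrity monitoring on the accreditation and access lists can be as simple as keyed digests taken when a list is approved and compared on every change. A sketch, assuming records are JSON-serialisable dicts with a hypothetical `badge_id` field and that the HMAC key is held outside the accreditation system, so an attacker who can edit the list cannot also recompute the baseline:

```python
import hashlib
import hmac
import json
from typing import Dict, Iterable, List


def record_digest(key: bytes, record: dict) -> str:
    """Keyed digest of one record, over a canonical JSON encoding."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, canonical, hashlib.sha256).hexdigest()


def baseline(key: bytes, records: Iterable[dict]) -> Dict[str, str]:
    """Map badge_id -> digest, taken when the list is approved."""
    return {r["badge_id"]: record_digest(key, r) for r in records}


def diff(key: bytes, approved: Dict[str, str],
         current: Iterable[dict]) -> Dict[str, List[str]]:
    """Compare the live list against the approved baseline."""
    now = baseline(key, current)
    return {
        "added": sorted(now.keys() - approved.keys()),
        "removed": sorted(approved.keys() - now.keys()),
        "modified": sorted(b for b in now.keys() & approved.keys()
                           if not hmac.compare_digest(now[b], approved[b])),
    }
```

Any unexplained entry in `added`, `removed` or `modified` is an alert; a quietly upgraded `zone` on an existing badge shows up as `modified` even though the badge count never changes.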

7. RF and the spectrum

This is my home ground and it is a high-impact one. In Spain, the state security forces, Policía Nacional and Guardia Civil, run on SIRDEE, the encrypted, nationwide TETRAPOL trunked network. (A point worth getting right: SIRDEE is TETRAPOL, not TETRA. TETRA is a different standard used by various regional and municipal services. People conflate the two constantly.) Whatever the technology, the whole event depends on resilient spectrum.

The attacks. Jam SIRDEE, the event-coordination radios and the cellular bands at a critical moment, which degrades command, amplifies crowd confusion (section 3) and isolates posts. Spoof GPS/GNSS to corrupt timing, geofencing, counter-UAS tracking and motorcade navigation (section 5). Deploy IMSI-catchers or rogue cells to track and intercept VIPs and the crowd. Stand up rogue access points near venues and command areas to capture traffic and pivot, including the “harvest now, decrypt later” collection I described in the Davos 2026 analysis.

Figure 7 — RF and wireless-warfare attack tree (USecVisLib).

Defense

Defending the spectrum means watching it: continuous monitoring and direction-finding across the operational area to catch jammers, spoofers and IMSI-catchers as they appear. Encrypted, frequency-hopping, jam-resistant primary comms, with a non-RF fallback (runners and hardwired nodes) for when the band goes dark. GNSS integrity monitoring with backup positioning. And basic RF hygiene: nothing sensitive over a channel that can be compromised.
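One of the simplest jamming indicators is a sudden rise in a channel’s noise floor relative to its own recent baseline. A sketch, assuming periodic noise-floor readings in dBm per monitored channel from the monitoring receivers; the 60-sample window and 10 dB rise are illustrative values, not tuned thresholds.

```python
from collections import deque
from statistics import median


class NoiseFloorMonitor:
    """Flags a channel whose measured noise floor rises well above its recent baseline."""

    def __init__(self, window: int = 60, rise_db: float = 10.0, min_samples: int = 10):
        self.history = deque(maxlen=window)  # recent non-alarm readings, in dBm
        self.rise_db = rise_db
        self.min_samples = min_samples

    def update(self, level_dbm: float) -> bool:
        """Return True if this reading looks like jamming relative to the baseline."""
        alarm = (len(self.history) >= self.min_samples
                 and level_dbm - median(self.history) >= self.rise_db)
        if not alarm:
            self.history.append(level_dbm)  # keep alarmed samples out of the baseline
        return alarm
```

Alarmed samples are kept out of the baseline so a jammer cannot normalise itself, although a slow, gradual ramp would still drift the median upward; that is one reason this belongs alongside direction-finding rather than replacing it.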

8. Insiders and the supply chain

A visit like this mobilises a huge, hastily assembled workforce. The official choir alone, the Gran Coro de Voces Católicas, has more than 1,700 volunteers, and that is before you count stewards, contractors, catering, AV, transport and security vendors across every venue. The weakest-link problem scales with that footprint.

What I would watch for: a volunteer or contractor infiltrated where mass onboarding outruns vetting. A pre-compromise of the AV and technical kit at the Congress chamber, the Royal Palace or the Bernabéu: an implanted listening or recording device, or a manipulated production system feeding the disinformation and deepfake plays from sections 1 and 2. Logistics access, with catering, cleaning and equipment vendors used as a way into sterile areas. And the transport providers, where driver credentials and vehicle-tracking data quietly reveal protected movements.

Figure 8 — Insider-threat and supply-chain attack tree (USecVisLib).

Defense

The countermeasures come down to proportionality and discipline. Vet to the level of access, with the deepest screening for technical, AV, transport and sterile-area roles. Least-privilege physical access with audited escorting. TSCM sweeps of every speaking venue before use, with the zone kept sterile afterward. And real security requirements on vendors, with continuous monitoring and a backup for anything essential.
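“Vet to the level of access” and “least privilege with audited escorting” map naturally onto a simple access check. The vetting tiers, zone names and escort rules below are hypothetical, chosen only to show the shape of the policy: a person needs the zone granted to their role, sufficient vetting for that zone, and an escort where the zone demands one, and every decision is logged.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import FrozenSet, List, Tuple


class Vetting(IntEnum):
    BASIC = 1     # identity check
    ENHANCED = 2  # identity plus background check
    FULL = 3      # deepest screening tier


# Hypothetical zone policy: (minimum vetting tier, escort required).
ZONE_POLICY = {
    "public_perimeter": (Vetting.BASIC, False),
    "backstage": (Vetting.ENHANCED, False),
    "av_control": (Vetting.FULL, False),
    "sterile": (Vetting.FULL, True),
}

AUDIT: List[Tuple[str, str, bool, bool]] = []  # (badge, zone, escorted, allowed)


@dataclass(frozen=True)
class Person:
    badge_id: str
    vetting: Vetting
    zones: FrozenSet[str]  # zones granted to this role, nothing more


def may_enter(p: Person, zone: str, escorted: bool) -> bool:
    """Least-privilege check: zone granted, vetting sufficient, escort if required."""
    allowed = False
    if zone in p.zones and zone in ZONE_POLICY:
        required, needs_escort = ZONE_POLICY[zone]
        allowed = p.vetting >= required and (escorted or not needs_escort)
    AUDIT.append((p.badge_id, zone, escorted, allowed))  # every decision is recorded
    return allowed
```

Granting a zone is not enough on its own: a role that is nominally allowed into a sterile area is still refused if its vetting tier is too low, which is the layering argued for above.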

9. Physical and CBRN, at the protective-doctrine level

I will keep this at the level a protective detail actually plans against, and ground it again in the record: 1981 in St. Peter’s Square, 1982 at Fátima, the 1995 Bojinka plot.

The vectors to plan for are a close approach by a lone actor at a rope line, along the procession or on the Popemobile route (an edged-weapon or thrown-object threat from inside a permitted crowd); an elevated firing position along the Castellana axis or around the open plazas, which is what sightline management and counter-sniper overwatch exist for; a low-grade chemical or irritant dispersal in the crowd whose real effect is panic and a crush (sections 3 and 4) rather than mass toxicity; and an improvised or vehicle-borne explosive at a venue perimeter or along the route.

Figure 9 — Physical and CBRN-in-crowd attack tree (USecVisLib).

Defense

Against all of that: screened sterile zones with search and magnetometers at controlled entry, counter-sniper and elevated-position domination with the structures surveyed in advance, hostile-vehicle mitigation on every crowd-facing route, CBRN detection and decontamination staged for a mass-casualty contingency, a saturating uniformed and plainclothes presence at the rope lines, and pre-positioned, redundant medical capacity matched to the density map.
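Controlled entry has a capacity side that feeds straight back into section 3, because a screening point that cannot keep pace becomes a dense queue. A back-of-the-envelope sketch: lanes needed equals the arrival rate divided by per-lane throughput, rounded up, plus a spare margin. Every number here is an assumption for illustration, not a figure from the Madrid plan, and a real plan would size for the peak arrival rate rather than the average.

```python
import math


def lanes_needed(attendees: int, arrival_window_h: float,
                 per_lane_per_h: float, spare_pct: int = 20) -> int:
    """Screening lanes needed so the entry queue does not grow without bound,
    plus a spare margin for lane failures and arrival surges."""
    arrival_rate = attendees / arrival_window_h      # people per hour
    base = math.ceil(arrival_rate / per_lane_per_h)  # lanes that just keep pace
    return base + math.ceil(base * spare_pct / 100)
```

For example, 100,000 attendees arriving over 4 hours at an assumed 500 people per lane per hour gives 50 lanes just to keep pace, and 60 with a 20% spare margin.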

10. The convergence scenario

If I have one thesis across this whole series, it is that the defining threat is not any single vector. It is the deliberate sequencing of several of them, fast. Applied to this visit, it reads like this. In the days before, a disinformation campaign (section 1) polarises the public and seeds counter-mobilisation near the venues. At the chosen moment, coordinated cyber (section 6) and RF (section 7) actions degrade CCTV, comms and situational awareness. A drone payload or a staged report (sections 3 and 4) starts a panic at a critical bottleneck. A deepfaked “official” evacuation order (section 2), pushed through compromised signage or PA, turns that panic into a crush. And in the chaos, a primary objective is pursued while a pre-staged false narrative (section 1) claims and frames the event for the world before the authorities can get a word out.

Figure 10 — Convergence scenario as an attack graph: prime, blind, trigger, amplify, exploit, with CVSS-scored vulnerabilities along the chain (USecVisLib).

Defense

No single countermeasure stops that. The only thing that does is an integrated, fast, multi-domain defense built on one shared picture of what is happening: a single fused common operating picture across Casa Real security, Policía Nacional, Guardia Civil, Madrid municipal police, the Vatican Gendarmerie and advance team, and the intelligence services, correlated fast enough to matter. Every per-section defense above feeds into that one picture, because the convergence attack is precisely the one a fragmented, human-speed defense cannot answer.
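The value of the fused picture is easiest to see in a toy correlator. Each agency reports events tagged with a domain and a map sector; the sketch raises a convergence alert when at least three distinct domains appear in the same sector within 15 minutes. Sector IDs, domain labels and both thresholds are assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Event:
    source: str  # reporting agency
    domain: str  # e.g. "info", "cyber", "rf", "drone", "crowd", "physical"
    sector: str  # hypothetical map-sector identifier
    t: float     # minutes since the start of the operation


def convergence_alerts(events: Iterable[Event], window_min: float = 15.0,
                       min_domains: int = 3) -> Dict[str, List[str]]:
    """Return sectors where >= min_domains distinct threat domains were reported
    within window_min minutes, regardless of which agency reported them."""
    by_sector: Dict[str, List[Event]] = defaultdict(list)
    for e in events:
        by_sector[e.sector].append(e)
    alerts: Dict[str, List[str]] = {}
    for sector, evs in by_sector.items():
        evs.sort(key=lambda e: e.t)
        for i, first in enumerate(evs):
            # Domains seen in the window that opens at this event.
            domains = {e.domain for e in evs[i:] if e.t - first.t <= window_min}
            if len(domains) >= min_domains:
                alerts[sector] = sorted(domains)
                break
    return alerts
```

For instance, if a Guardia Civil RF report, a municipal police crowd report and a Policía Nacional cyber report all land in the same sector within nine minutes, the correlator raises an alert that none of the three agencies could raise from its own feed.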

Conclusion

A papal visit compresses every threat domain into a single televised, open-air, ideologically charged event. The lessons of the SRF-IWS series all apply, but the protectee changes the maths.

The first point is that information is the main battlefield. For a Pope speaking about migration before Parliament and to crowds of up to 1.8 million, the disinformation and deepfake vectors are more likely, and probably more consequential, than any kinetic act. Strategic communications is a security function, full stop.

The second is that the crowd is both the audience and the weapon. You can produce mass casualties in a dense crowd without firing a shot, just by engineering panic. Crowd dynamics deserve the same planning effort as counter-sniper coverage.

The third is convergence. Disinformation that primes, cyber and RF that blind, drones that trigger, deepfakes that amplify, run in sequence and fast. The defense has to be just as integrated and just as fast.

The fourth is that the history is the warning. Attacks on pontiffs are documented fact, not imagination, and planning has to respect that record.

And the last is that speed and unity decide the outcome. A fragmented, human-speed defense cannot answer a coordinated, multi-domain operation. A single shared command picture is the price of entry.

The point of writing all of this down is simple: the defenders, not the adversaries, should be the ones who have thought it through first.

SRF

Follow: @simonroses

This article continues the SRF-IWS research into information warfare strategies applied to high-profile protective environments.